From Automation to Autonomy: Is the Clinical Lab Ready for AI as an Active Partner?

It’s 7:40 a.m. in the lab.

A STAT sample is waiting. A batch is ready for validation. Turnaround time is already being questioned. Then the system flags an exception and suggests a next step, not like a tool, but like a colleague who expects you to follow.

That is the shift labs are facing right now. AI is no longer just automation. It is starting to influence priorities, workflows, and diagnostic confidence.

Study note: This MDForLives insight pulse reflects perspectives from lab managers, supervisors, and directors across North America and Western Europe on where AI is already proving useful, what is slowing its scale-up, and which decisions still feel too high-stakes to delegate.

If automation made labs faster, this next phase raises a sharper question: when AI is confident, who owns the call?

Are labs already deploying AI, or still watching from the sidelines?

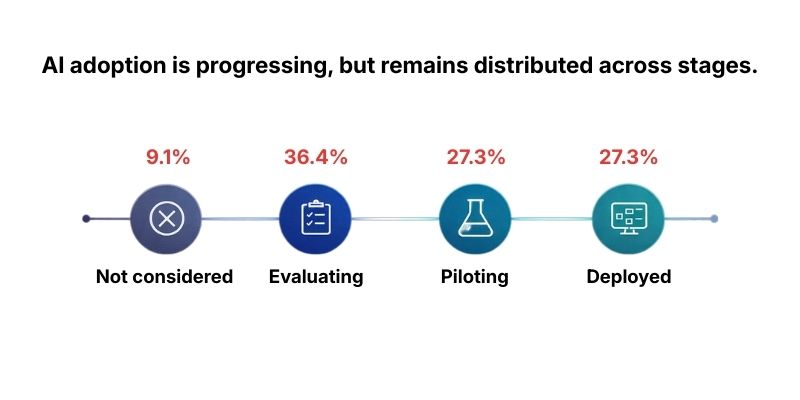

AI adoption is no longer a single question of “yes or no.”

In this pulse, the most common posture is “evaluated but not implemented” (36.4%). At the same time, 27.3% say AI is actively deployed across workflows, and 27.3% report it is being piloted in select areas. Only 9.1% say AI is not currently under consideration.

The insight is not “labs are slow.” It is more specific: labs are treating AI like a new capability that must earn permission, step by step. Evaluation is not hesitation. It is governance in motion.

Where does AI feel most valuable right now: diagnostics or operations?

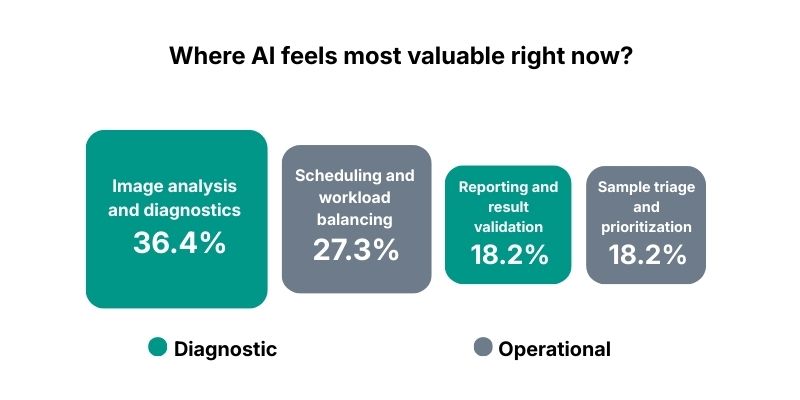

Once a lab moves from evaluation to pilots or deployment, the next question becomes more practical: where should AI earn its place first?

The top “immediate value” signal is image analysis and diagnostics (36.4%). Next is scheduling and workload balancing (27.3%). Sample triage and prioritization (18.2%) and reporting and result validation (18.2%) follow close behind.

The pattern is revealing: leaders want AI in two places at once.

- Where interpretation can be standardized.

- Where throughput can be stabilized.

That mix is a clue that “AI as a partner” is not only about diagnosis. It is also about operational resilience.

What is really holding labs back: systems, trust, or rules?

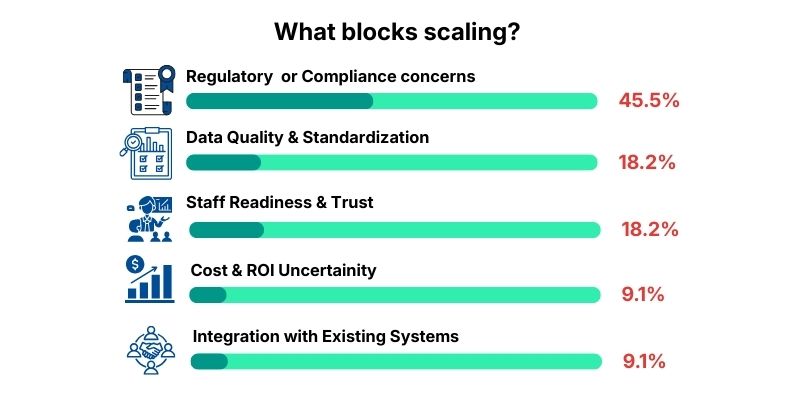

Value shows up in both diagnostics and operations, but value alone does not scale. The moment AI touches anything that can be audited, challenged, or questioned later, labs start asking a different question: what slows this down in the real world?

Here, the leading limiter is regulatory or compliance concerns (45.5%). A second tier follows: data quality and standardization (18.2%) and staff readiness and trust (18.2%). Cost and ROI uncertainty (9.1%) and integration with existing systems (9.1%) rank lower.

The deeper insight: adoption stalls less on capability and more on accountability. Labs do not need AI to be clever. They need AI to be governable.

“We can pilot tools, but scaling means being able to explain decisions under audit-level scrutiny.”

A. Rivera, MDForLives Panelist, Laboratory Manager

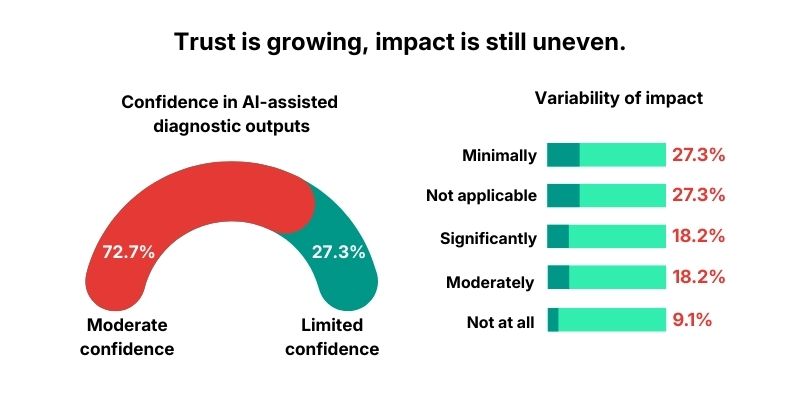

If confidence is “moderate,” what does that mean in practice?

And that is why adoption and trust are not the same chapter. Even labs that like the idea of AI still need to decide whether they believe the output enough to lean on it.

In this pulse, 72.7% report being moderately confident in AI-assisted diagnostic outputs, while 27.3% report limited confidence.

The insight is subtle but important: moderate confidence is not endorsement. It often means AI is acceptable as support, not acceptable as the final word.

Can AI reduce diagnostic variability without eroding trust?

But confidence is only half the story. In labs, the test is not whether AI sounds right. The test is whether it reliably changes outcomes, especially the one leaders care about most: reducing subjectivity and variability.

The signal here is mixed:

- Minimally (27.3%)

- Not applicable (27.3%)

- Significantly (18.2%)

- Moderately (18.2%)

- Not at all (9.1%)

The insight: many labs are either not using AI in the exact place where interpretation variability is decided, or they are not ready to credit AI for that reduction yet. AI may be present, but not yet positioned as the stabilizer.

If AI could “decide” operationally, where would you trust it first?

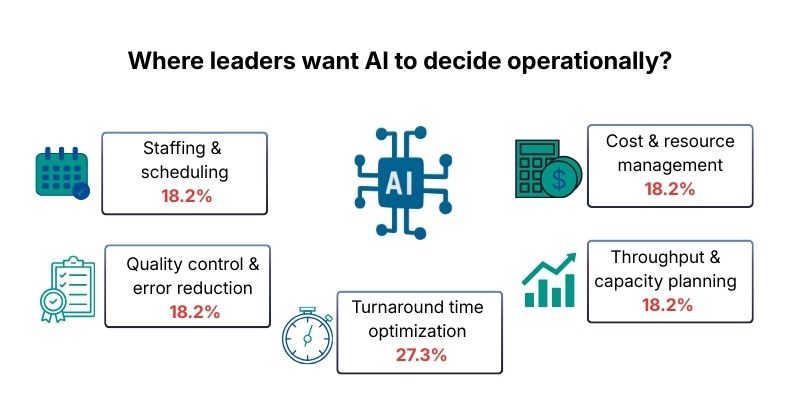

When variability reduction is uneven, leaders often pivot to a safer entry point: operations. It is easier to trial AI where it can improve flow without touching clinical judgment directly.

Operational priorities are distributed, but one leads: turnaround time optimization (27.3%). Several others cluster closely at 18.2% each: staffing/scheduling, quality control/error reduction, throughput/capacity planning, and cost/resource management.

The insight: labs are not waiting for one “AI brain.” They are looking for small, reliable decision engines that remove friction without introducing new risk.

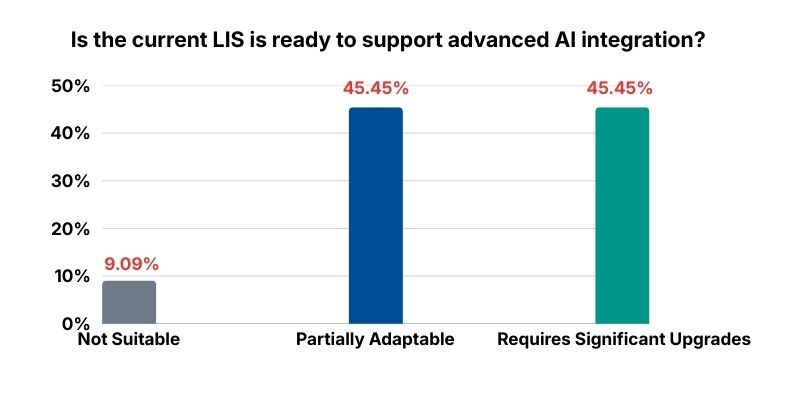

Is LIS readiness quietly limiting AI more than anyone admits?

Operational AI still needs plumbing. Even the best model becomes fragile if the workflow cannot integrate it cleanly or audit it properly.

Here, the readiness signal is telling: 63.6% describe their LIS as partially adaptable, and 36.4% say it requires significant upgrades.

The insight: many AI strategies fail quietly at integration. Not because the model is weak, but because the system cannot carry the weight of audit trails, orchestration, and clean handoffs between human and machine.
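To make "audit trails and clean handoffs" concrete, here is a minimal Python sketch of what a single audit entry for an AI suggestion might capture. Everything in it is an assumption for illustration: the field names, the `HUMAN_SIGNOFF_REQUIRED` categories, and the `record_decision` helper are hypothetical and do not describe any particular LIS or vendor API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical action categories that must never close without a named human.
# A real lab would define its own list under its own governance policy.
HUMAN_SIGNOFF_REQUIRED = {"diagnostic_interpretation", "result_validation"}


@dataclass
class AuditEntry:
    """One AI suggestion plus who made the final call (illustrative fields)."""
    sample_id: str
    action: str
    ai_suggestion: str
    model_version: str
    final_decision: Optional[str] = None  # empty until the entry is closed
    decided_by: Optional[str] = None      # reviewer ID, or "system" if automated
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


def record_decision(entry: AuditEntry, final_decision: str,
                    reviewer: Optional[str] = None) -> AuditEntry:
    """Close an entry, refusing to do so without a reviewer where one is required."""
    if entry.action in HUMAN_SIGNOFF_REQUIRED and reviewer is None:
        raise PermissionError(f"{entry.action} requires a named human reviewer")
    entry.final_decision = final_decision
    entry.decided_by = reviewer or "system"
    return entry
```

In this sketch an operational suggestion can close automatically, while anything near sign-out cannot: calling `record_decision` on a `"diagnostic_interpretation"` entry without a `reviewer` raises `PermissionError`. The point is that the handoff rule lives in the workflow itself, so the audit trail records both what the model suggested and who owned the call.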

Are governance and security concerns slowing AI more than regulation itself?

And even if the LIS can stretch, there is still a second gate that labs do not compromise on: data governance and security.

In this pulse, 72.7% call governance and security a major limiting factor, while 27.3% describe it as a moderate concern.

The insight: the real fear is not innovation. It is loss of control. Once AI begins influencing decisions, labs need to answer questions about data custody, auditability, and accountability with the same rigor they apply to results.

Will AI become central to lab operations, or expand selectively?

That pressure shapes how leaders see the next few years. Instead of big leaps, many plan in controlled steps.

In this pulse, 81.8% expect AI to expand selectively over the next 2 to 3 years. Smaller shares expect it to remain niche (9.1%) or feel uncertain (9.1%).

The insight: “selective expansion” is not lack of ambition. It is how labs protect reliability while they learn what AI can safely own.

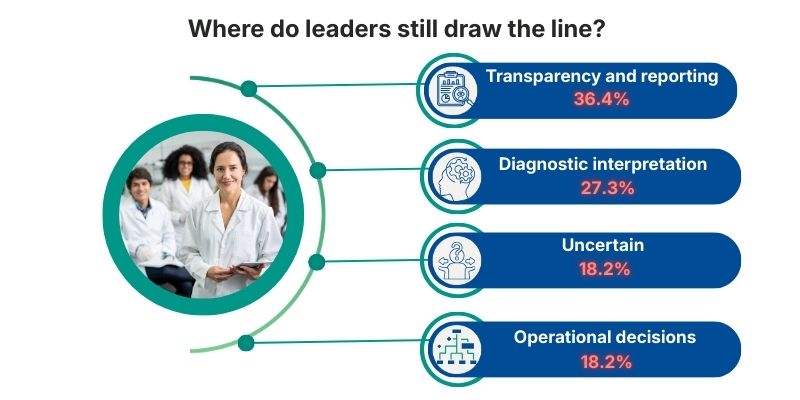

What decisions do lab leaders still refuse to delegate to AI?

Selective expansion still runs into one last line that leaders protect carefully. When decisions affect interpretation, sign-out, or accountability, the lab tends to pause.

The top hesitation theme is diagnostic interpretation and grading (27.3%). Next are data governance and transparency (18.2%) and result validation and reporting (18.2%). Another 18.2% remain unsure.

The insight: the closer AI gets to sign-out, the more it stops being technology and becomes responsibility.

Quiet synthesis: What this pulse suggests about “AI as a partner”

By the time the lab day is fully moving, decisions do not arrive as announcements. They arrive as prompts. A flagged exception. A suggested rerun. A priority reorder.

That is why the shift matters. AI is no longer just speeding up steps. It is starting to influence which steps happen next.

This pulse shows a clear pattern: labs are open to AI, but they want it governable first. Confidence is present, but delegation is conditional. Governance, security, and LIS readiness decide what scales, not curiosity.

So the next phase will not be autonomy overnight. It will be selective expansion, use case by use case, with a firm boundary around sign-out decisions.

When the system is confident and the stakes are real, the question that remains is simple: Who owns the call?

Frequently Asked Questions

Are clinical labs ready for AI beyond automation?

Many labs are already evaluating or piloting AI, with a meaningful share deploying it across workflows. Readiness varies because labs require governance, integration, and accountability before scaling.

Where does AI add the most value today in lab settings?

Leaders point to image analysis and diagnostics as the strongest immediate value, with operational use cases like scheduling, triage, and validation also ranking high.

What is holding labs back from scaling AI adoption?

Regulatory and compliance concerns lead, followed by data quality and staff readiness. Underneath these is a core need: AI must be auditable and accountable.

How confident are leaders in AI-assisted diagnostic outputs?

Confidence is largely moderate, which usually translates to AI as support rather than a final decision-maker.

Does AI reduce subjectivity and variability in interpretation?

The impact is mixed. Many report minimal reduction or say it is not applicable in their workflow yet, suggesting AI is not consistently placed where variability is decided.

Is LIS infrastructure limiting AI potential?

Yes. Many LIS environments are only partially adaptable or require significant upgrades to support advanced AI integration and auditability.

What decisions are labs most hesitant to delegate to AI?

Diagnostic interpretation and grading top the list, followed by data governance and sign-out-related steps such as result validation and reporting.


About the Author: MDForLives

MDForLives is a global healthcare intelligence platform where real-world perspectives are transformed into validated insights. We bring together diverse healthcare experiences to discover, share, and shape the future of healthcare through data-backed understanding.